DeepSeek, a Chinese AI company, has recently unveiled its AI model DeepSeek V3, positioning it as a competitor to products from industry giants, such as OpenAI’s ChatGPT and Google’s Gemini.

The newly released model, now accessible on GitHub, employs a Mixture-of-Experts (MoE) architecture with 671 billion total parameters, of which only 37 billion are activated for each token processed. This is a substantial upgrade from its predecessor, V2, which contained 236 billion total parameters, with 21 billion active during inference.

The total parameter count has nearly tripled, yet the share of parameters active per token has fallen from roughly 9% to about 5.5%. The MoE approach thus allows for a larger, more capable model while keeping the computation required for each token manageable.
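To make the total-versus-active distinction concrete, here is a minimal sketch of generic top-k expert routing in Python. It is not DeepSeek's actual router: the eight toy experts, the random router scores, the softmax gating, and the helper names `active_fraction`, `top_k_route`, and `moe_forward` are all assumptions made for illustration. Only the parameter counts come from the article.

```python
import math
import random


def active_fraction(total_billions, active_billions):
    """Share of a model's parameters that are used for a single token."""
    return active_billions / total_billions


def top_k_route(scores, k):
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - peak) for i in top]  # subtract peak for stability
    total = sum(exps)
    return top, [e / total for e in exps]


def moe_forward(x, experts, scores, k):
    """Run only the k selected experts and mix their outputs by gate weight."""
    chosen, weights = top_k_route(scores, k)
    output = sum(w * experts[i](x) for i, w in zip(chosen, weights))
    return output, chosen


if __name__ == "__main__":
    # Hypothetical toy experts: expert s simply multiplies its input by s.
    experts = [lambda x, s=s: s * x for s in range(1, 9)]
    rng = random.Random(0)
    scores = [rng.gauss(0, 1) for _ in experts]  # stand-in for a learned router

    _, chosen = moe_forward(2.0, experts, scores, k=2)
    print(f"experts evaluated: {len(chosen)} of {len(experts)}")

    # Parameter counts quoted in the article, in billions.
    print(f"V3 active share: {active_fraction(671, 37):.1%}")  # 5.5%
    print(f"V2 active share: {active_fraction(236, 21):.1%}")  # 8.9%
```

The point of the sketch is that the unselected experts are never called, so the cost per token tracks the active parameters rather than the total.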

- Mixture-of-Experts Architecture: 671 billion total parameters, of which only 37 billion are activated per token

- Efficient Performance: Three times faster inference compared to its predecessor

- Cost-Effectiveness: Significantly cheaper than rival models

- Open-Source Availability: Released under a permissive license that allows developers to download and modify it

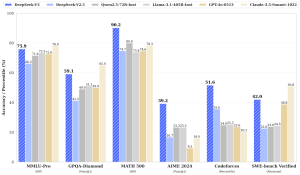

DeepSeek V3 has demonstrated remarkable capabilities across various domains:

- Coding: Outperforms models like Meta’s Llama 3.1, OpenAI’s GPT-4o, and Alibaba’s Qwen 2.5

- Benchmark Scores:

  - 88.5 on the MMLU benchmark

  - 91.6 on the DROP benchmark

- Ranks in the top 10 of the ChatBot Arena

The model excels in tasks such as coding, translation, and writing, making it a versatile and powerful AI solution that challenges the dominance of established AI providers.

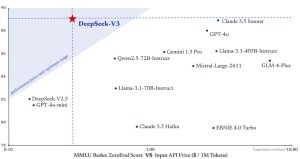

The release of DeepSeek V3, followed by the R1 reasoning model, has had far-reaching consequences. Tech stocks experienced significant volatility, with industry leader Nvidia losing roughly $600 billion in market value in a single day. This shift has intensified competition and accelerated development, particularly in natural language processing, coding, and multi-modal AI capabilities.

In the Chinese tech ecosystem, DeepSeek’s emergence has triggered a price war. Giants like Alibaba, Baidu, and Tencent have been compelled to lower their API pricing and improve model performance. DeepSeek’s pricing of just $0.55 per million input tokens has been a significant disruptive factor.
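As a rough illustration of what the quoted price means in practice, the sketch below converts input-token counts into dollars. The helper name `input_cost_usd` and the sample workloads are hypothetical; only the $0.55 per million input tokens figure comes from the article, and the calculation covers input tokens only.

```python
# Price quoted in the article, in US dollars per million input tokens.
PRICE_PER_MILLION_INPUT_USD = 0.55


def input_cost_usd(input_tokens, price_per_million=PRICE_PER_MILLION_INPUT_USD):
    """Estimate the input-side cost of a workload at a per-million-token price."""
    return input_tokens / 1_000_000 * price_per_million


if __name__ == "__main__":
    # Hypothetical workloads, chosen only to show the scale.
    for tokens in (10_000, 1_000_000, 250_000_000):
        print(f"{tokens:>12,} input tokens -> ${input_cost_usd(tokens):,.2f}")
```

Passing a different `price_per_million` gives a like-for-like comparison against another provider's input rate.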

DeepSeek’s model lineup includes:

- DeepSeek V3: A general-purpose model with 671 billion total parameters, designed for multitasking across various domains.

- DeepSeek Coder: Tailored for programming tasks, with versions ranging from 1.3B to 33B parameters.

- DeepSeek R1: Focused on logical reasoning and problem-solving, with distilled versions available in sizes from 1.5B to 70B parameters.

These models are accessible through both an API and free local use options, providing flexibility for developers and researchers. DeepSeek’s commitment to open-source development has positioned it as a significant player in the AI landscape, challenging established competitors with cost-effective and efficient models.